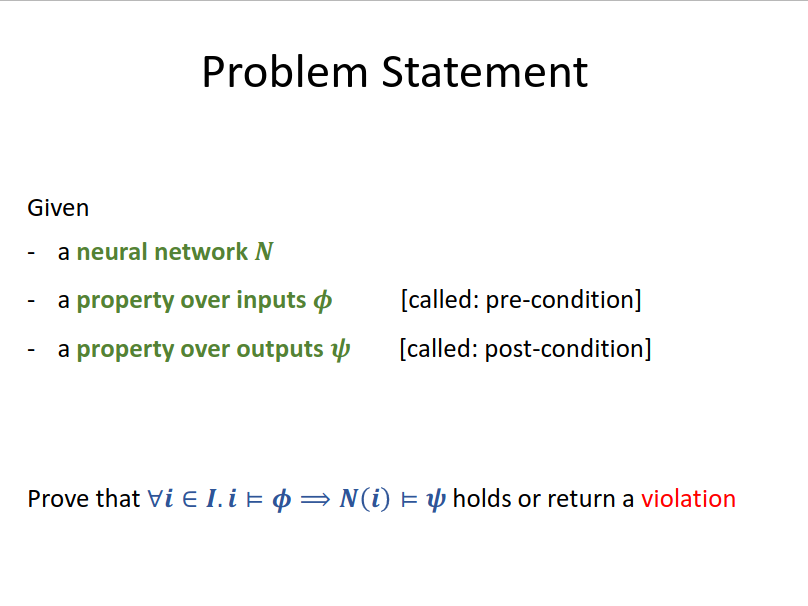

Certification of Neural Networks

e.g. to prove Robustness (NN) of a network.

For neural networks (which are generally loop-free), it is possible to have both sound and complete certification methods (but these may not scale to realistic networks).

Incomplete Methods

These methods including convex relaxation, are usually scalable.

Bound propagation

Starting with pre-condition , we push through the NN, computing an over-approximation of the effects of each layer.

This method needs two key parts:

- The convex approximation (i.e. shape): Box, Zonotope, Polyhedra

- The abstract transformer of the shapes.

A trade-off between approximation and speed exists: transformers for Box are fast, but imprecise, while Polyhedra is more precise but in exponential complexity.

Box

Box Relaxation

Box Relaxation

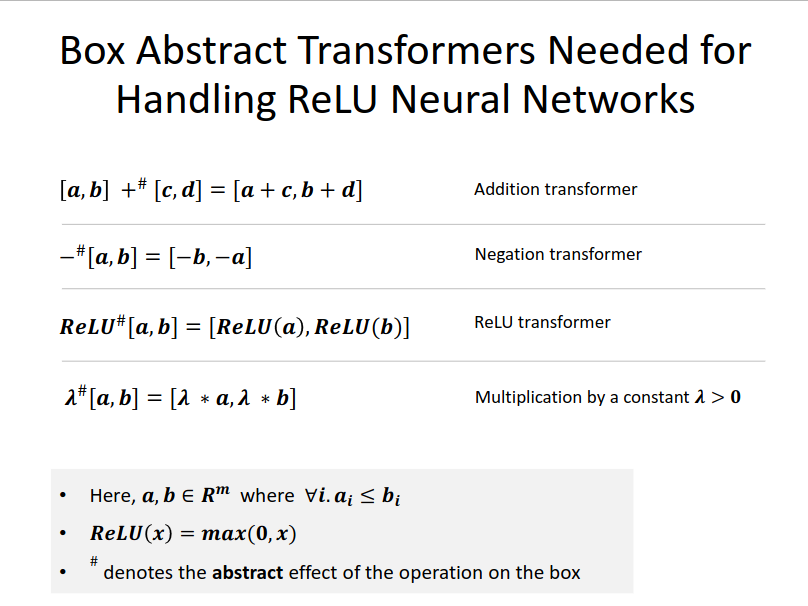

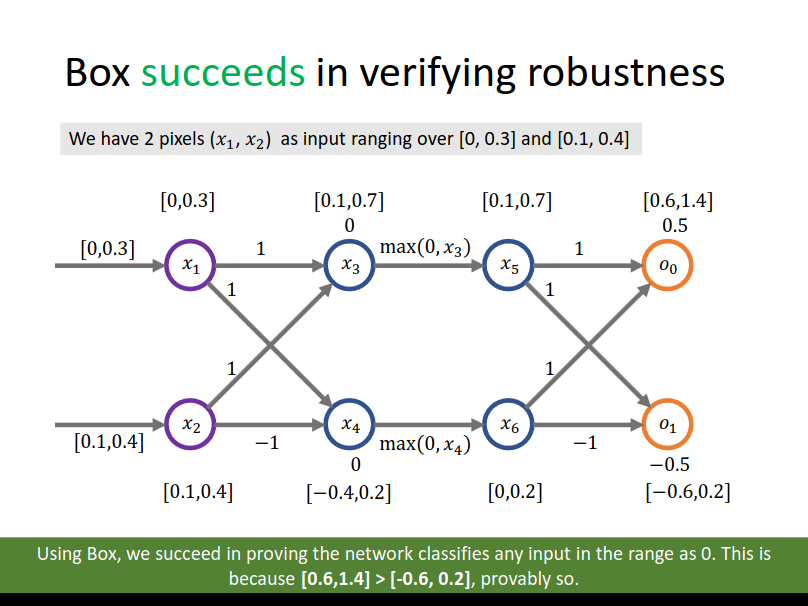

The simplest and most efficient convex relaxation. It is basically interval domain.

The transformers are straight-forward:

Box transformer is not exact. Nevertheless, it might be enough to verify the post-condition.

指向原始笔记的链接

Zonotope

Zonotope Relaxation

Zonotope Relaxation

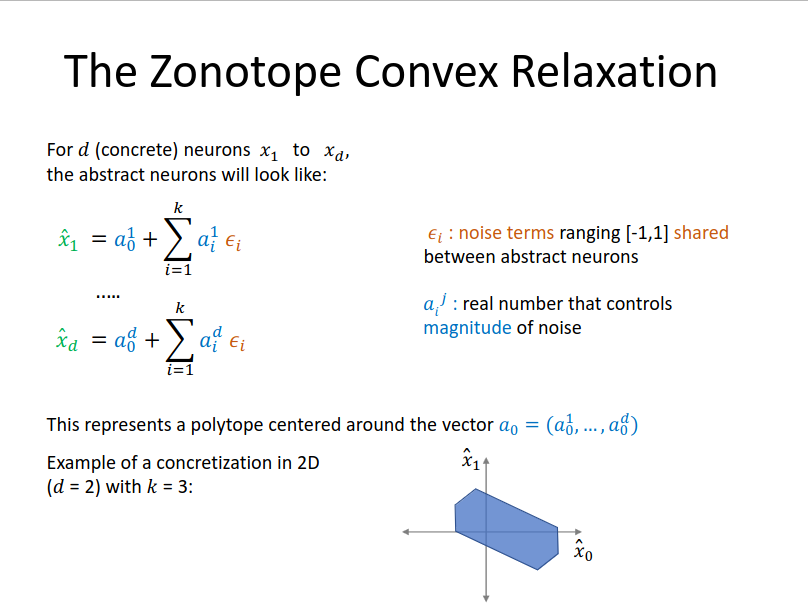

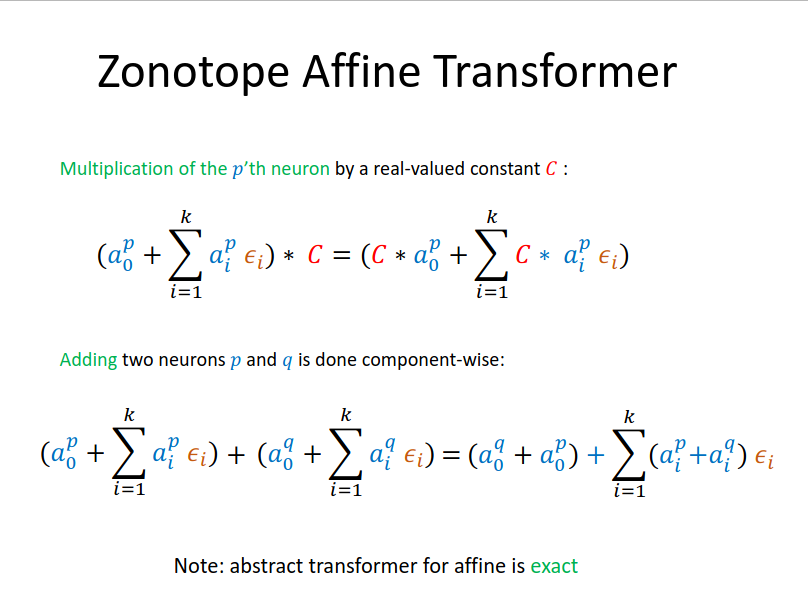

Zonotope is a convex relaxation whose affine transformer will not lose precision (that is, it will be exact); however, its ReLU transformer will again lose some precision.

Zonotope has been shown effective in both verification and provable training.

Shape: each variable is captured in an affine form, and can be related through shared parameters. Formally:

Transformers:

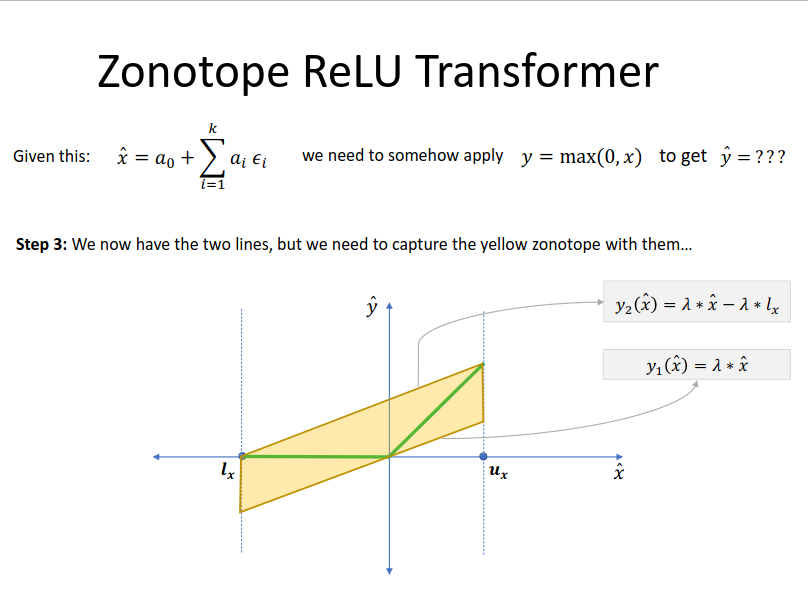

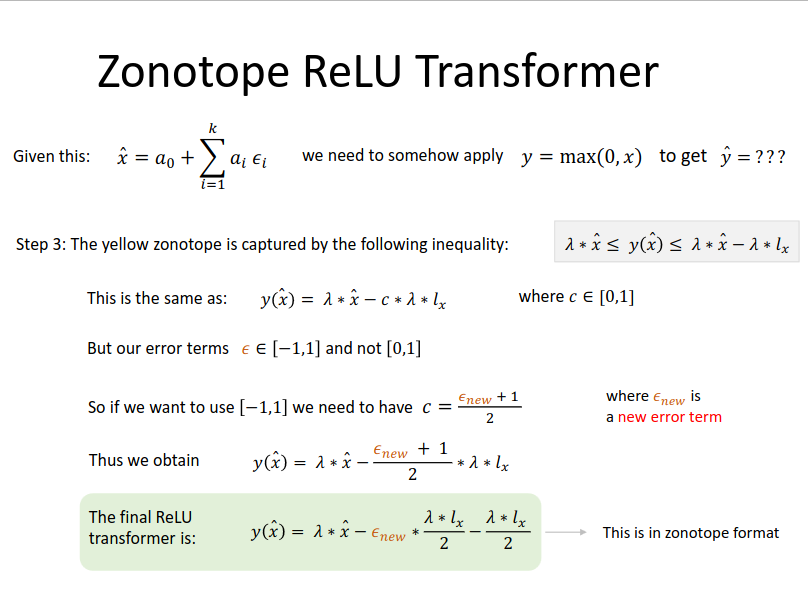

For ReLU transformer, first compute the lower and upper bound for the input. If crosses the boundary , then:

with

And then transform to Zonotope format:

Related

指向原始笔记的链接

DeepPoly

DeepPoly Relaxation

DeepPoly Relaxation

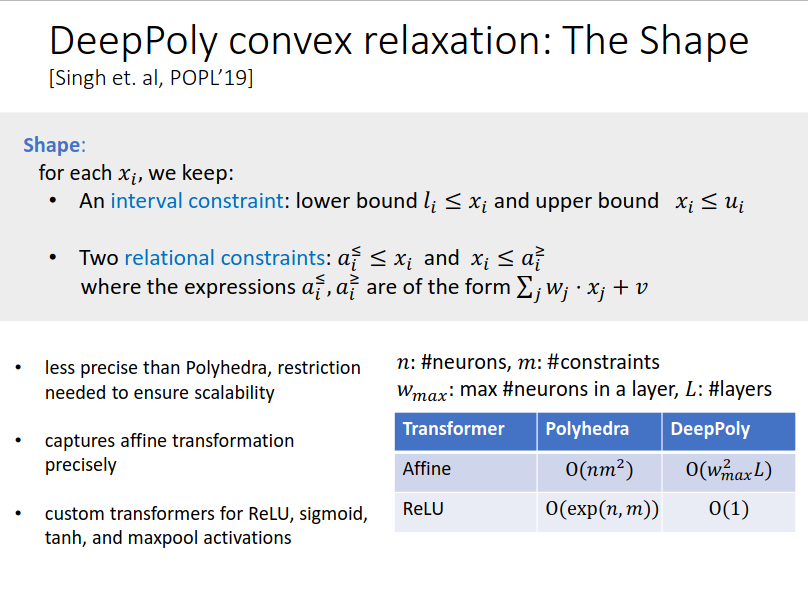

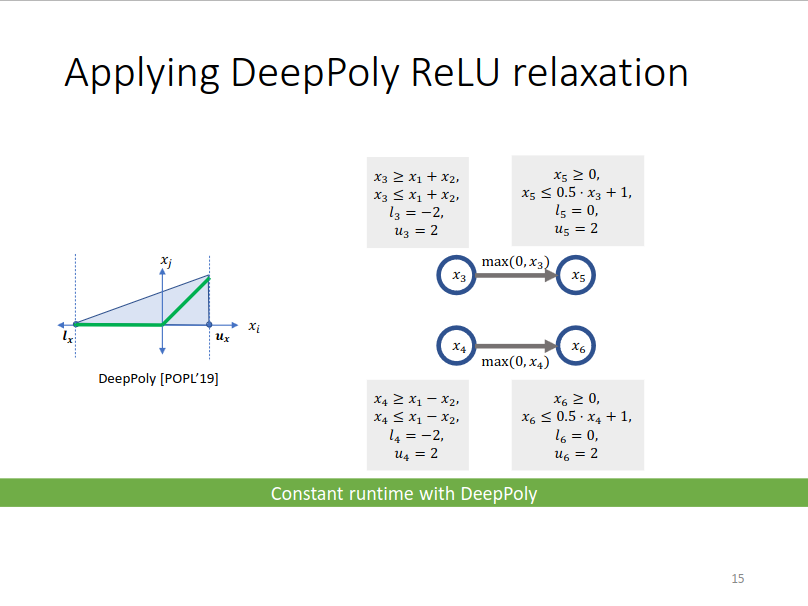

Like Zonotope, DeepPoly is exact for affine and is its ReLU transformer produces a smaller area than the Zonotope ReLU transformer.

Shape:

ReLU transformer:

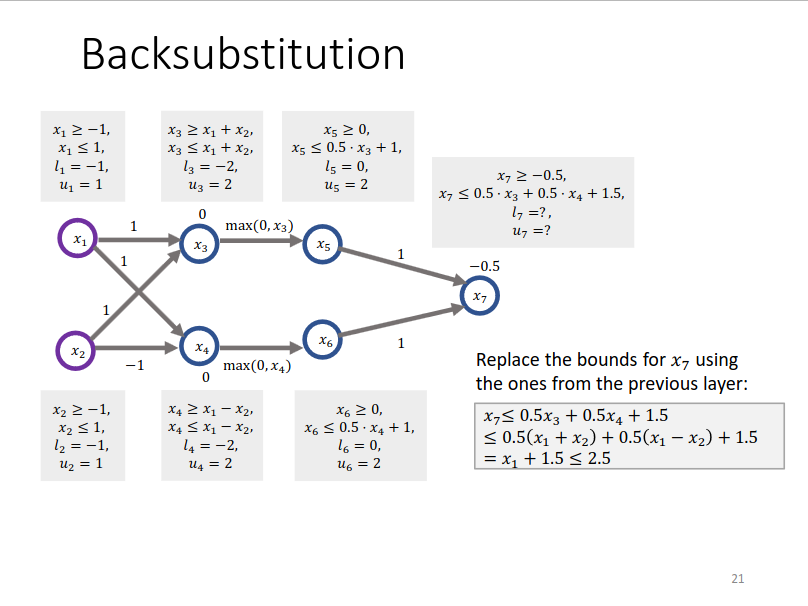

When computing bounds, backsubstitution is utilized for tighter bound:

指向原始笔记的链接

Complete Methods

Mixed Integer Linear Program (MILP)

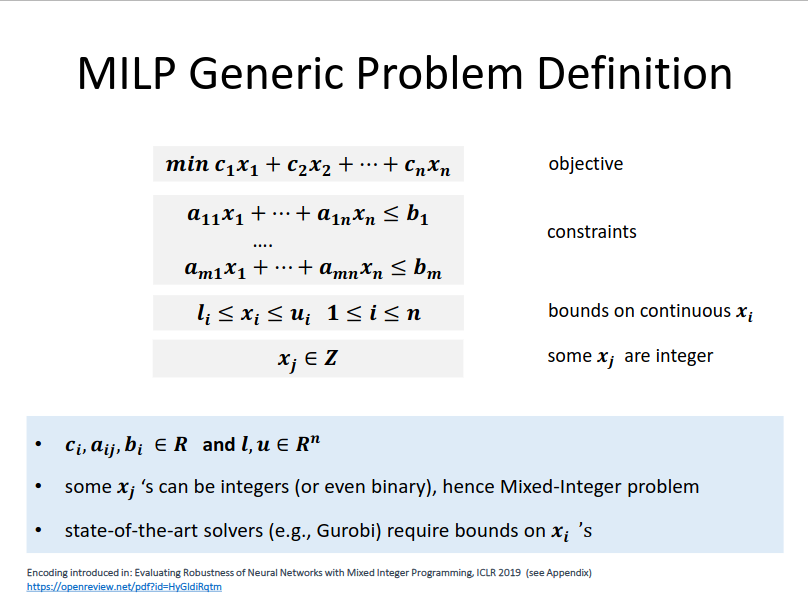

Solving MILP is a NP-complete problem. The general definition of a MILP constraint is:

Encoding NN as MILP

Encoding NN as MILP

指向原始笔记的链接

- Affine layer: direct.

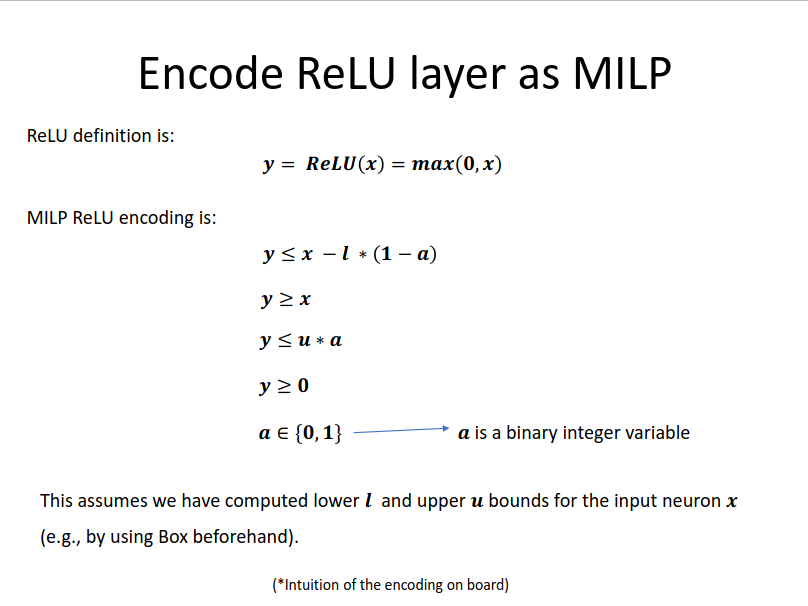

- ReLU layer:

- Pre-condition: Normally box constraints like

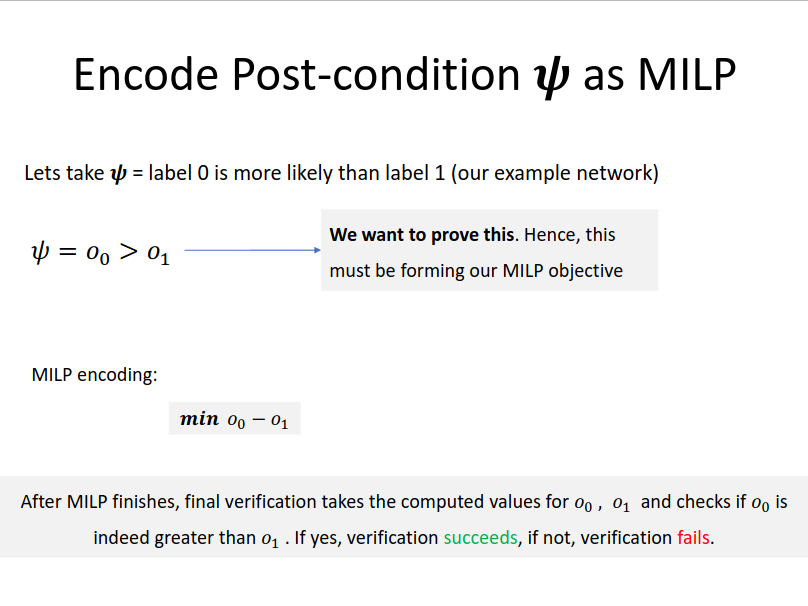

- Post-condition: